G3Di

G3Di is a realistic and challenging human interaction dataset for multiplayer gaming, containing synchronised colour, depth and skeleton data. The dataset was captured using a novel gamesourcing approach where the users were recorded whilst playing computer games.

This dataset contains 12 people split into 6 pairs. Each pair interacted through a gaming interface showcasing six sports: boxing, volleyball, football, table tennis, sprint and hurdles. The interactions can be collaborative or competitive depending on the specific sport and game mode. In this dataset, volleyball was played collaboratively and the other sports competitively. In most sports the interactions were explicit and can be decomposed into an action and a counter action, but in the sprint and hurdles the interactions were implicit, as the players competed with each other for the fastest time.

The actions for each sport are: boxing (right punch, left punch and defend), volleyball (serve, overhand hit, underhand hit and jump hit), football (kick, block and save), table tennis (serve, forehand hit and backhand hit), sprint (run) and hurdles (run and jump). The interactions between the players are listed in Table 1. Most sequences contain multiple action classes and were recorded in a controlled indoor environment with a fixed camera, a typical setup for gesture-based gaming.

Formats

Because of the formats selected, all the recorded data and metadata can be viewed without any special software tools. The three streams were recorded at 30 fps in a mirrored view. The depth and colour images were stored as 640x480 PNG files and the skeleton data as XML files.

For each sequence we have recorded each frame as colour, raw depth and depth transformed to colour co-ordinates, all in PNG format (see Figure 1). The raw depth images contain the depth of each pixel in millimetres and were stored as 16-bit greyscale; the raw colour images were stored as 24-bit RGB. The depth information was also mapped to the colour co-ordinate space and stored as 16-bit greyscale. Each 16-bit depth value contains 13 bits of depth data and 3 bits that identify the player. The player index can be used to segment the depth maps by user (see Figure 2).
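As an illustration of the 13-bit/3-bit split, the following minimal sketch separates a packed 16-bit value into depth and player index. The text above does not say which bits hold which field, so the layout used here (player index in the 3 least significant bits, depth in the upper 13 bits) is an assumption borrowed from the usual Kinect SDK v1 convention and should be checked against the actual files. The function names are hypothetical.

```python
PLAYER_BITS = 3                         # bits that identify the player (from the text)
PLAYER_MASK = (1 << PLAYER_BITS) - 1    # 0b111


def split_depth(packed):
    """Split a packed 16-bit value into (depth_mm, player_index).

    ASSUMPTION: the player index occupies the 3 least significant bits
    and the depth in millimetres occupies the upper 13 bits.
    """
    return packed >> PLAYER_BITS, packed & PLAYER_MASK


def pack_depth(depth_mm, player_index):
    """Inverse of split_depth, under the same assumed bit layout."""
    return (depth_mm << PLAYER_BITS) | player_index


packed = pack_depth(2000, 2)            # a pixel 2 m away that belongs to player 2
depth_mm, player = split_depth(packed)
print(depth_mm, player)
```

Because only shift and mask operators are used, the same function should also work element-wise on a 16-bit unsigned integer array once a depth PNG has been loaded with a library that preserves the full 16 bits. Under the assumed layout, a mask such as `player == 1` would then select the pixels of one player.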

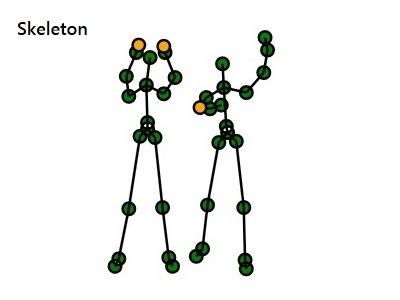

In addition, we have recorded skeleton data for each frame in XML format. The root node in the XML file is an array of skeletons. Each skeleton contains the player's position and pose. The pose comprises 20 joints as defined by Microsoft. The player and joint positions are given as X, Y and Z co-ordinates in metres. These positions are also mapped into the depth and colour co-ordinate spaces. The skeleton data includes a joint tracking state, displayed in Figure 3 as tracked (green), inferred (yellow) and not tracked (red).
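The sketch below shows one way such a skeleton file could be read with Python's standard library. The element names (`ArrayOfSkeleton`, `Skeleton`, `Joint`, `JointType`, `TrackingState`, `Position`, `X`, `Y`, `Z`) and the inline sample are hypothetical, chosen only to mirror the structure described above; the real schema should be checked by opening one of the XML files and the names adjusted accordingly.

```python
import xml.etree.ElementTree as ET

# HYPOTHETICAL sample: tag names and values are assumptions, not the dataset's schema.
SAMPLE = """<ArrayOfSkeleton>
  <Skeleton>
    <Joints>
      <Joint>
        <JointType>Head</JointType>
        <TrackingState>Tracked</TrackingState>
        <Position><X>0.10</X><Y>0.65</Y><Z>2.30</Z></Position>
      </Joint>
      <Joint>
        <JointType>HandLeft</JointType>
        <TrackingState>Inferred</TrackingState>
        <Position><X>-0.25</X><Y>0.05</Y><Z>2.10</Z></Position>
      </Joint>
    </Joints>
  </Skeleton>
</ArrayOfSkeleton>"""


def read_joints(xml_text):
    """Return one dict per skeleton, mapping joint name to state and position in metres."""
    root = ET.fromstring(xml_text)
    skeletons = []
    for skeleton in root.iter("Skeleton"):
        joints = {}
        for joint in skeleton.iter("Joint"):
            position = joint.find("Position")
            joints[joint.findtext("JointType")] = {
                "state": joint.findtext("TrackingState"),
                "xyz": tuple(float(position.findtext(axis)) for axis in ("X", "Y", "Z")),
            }
        skeletons.append(joints)
    return skeletons


for name, joint in read_joints(SAMPLE)[0].items():
    print(name, joint["state"], joint["xyz"])
```

Keeping the tracking state next to each position makes it straightforward to discard or down-weight inferred and untracked joints before further processing.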

Download

We make the data available to researchers in the computer vision community. The only requirement for using G3Di is to cite the following paper:

V. Bloom, V. Argyriou and D. Makris, "Hierarchical transfer learning for online recognition of compound actions", Computer Vision and Image Understanding, vol. 144, pp. 62-72, 2016.

In Table 1, you can click on the links to download the data for the corresponding gaming scenario. Each zip file contains 6 folders corresponding to the 6 pairs of actors. Each of these folders contains 4 folders, corresponding to the colour, raw depth, transformed depth and skeleton files.
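After extracting an archive, a quick consistency check is to count the files in each stream folder. The sketch below assumes only the two-level layout described above (pair folders containing stream folders); it does not assume any folder names, and the `summarise` helper and the example path are hypothetical.

```python
from pathlib import Path


def summarise(root):
    """Return {pair_folder: {stream_folder: file_count}} for an extracted archive."""
    summary = {}
    for pair_dir in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        summary[pair_dir.name] = {
            stream_dir.name: sum(1 for f in stream_dir.iterdir() if f.is_file())
            for stream_dir in sorted(s for s in pair_dir.iterdir() if s.is_dir())
        }
    return summary


if __name__ == "__main__":
    extracted = Path("path/to/extracted/archive")   # placeholder path
    if extracted.is_dir():
        for pair, streams in summarise(extracted).items():
            print(pair, streams)
```

If the archive is complete, each pair should report four stream folders.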

Table 1: Interaction sequences

Boxing

| Action | Counter action | Interaction |
| --- | --- | --- |
| Right punch | Defend | Block |
| Right punch | Other | Attack |
| Right punch | Right punch | Attack |
| Right punch | Left punch | Attack |
| Left punch | Defend | Block |
| Left punch | Other | Attack |
| Left punch | Left punch | Attack |

Table tennis

| Action | Counter action | Interaction |
| --- | --- | --- |
| Serve | Forehand hit | Rally |
| Serve | Backhand hit | Rally |
| Serve | Other | Miss |
| Forehand hit | Forehand hit | Rally |
| Forehand hit | Backhand hit | Rally |
| Forehand hit | Other | Miss |
| Backhand hit | Backhand hit | Rally |
| Backhand hit | Other | Miss |

Volleyball

| Action | Counter action | Interaction |
| --- | --- | --- |
| Underhand hit | Underhand hit | Set |
| Underhand hit | Overhand hit | Set |
| Overhand hit | Underhand hit | Set |
| Overhand hit | Overhand hit | Set |
| Jump hit | Underhand hit | Set |
| Jump hit | Overhand hit | Set |
| Underhand hit | Jump hit | Attack |
| Overhand hit | Jump hit | Attack |
| Jump hit | Jump hit | Attack |

Football

| Action | Counter action | Interaction |
| --- | --- | --- |
| Kick | Kick | Block |
| Kick | Block | Block |
| Kick | Save | Block |
| Kick | Other | Attack |
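When working with the annotations, the rows of Table 1 can be treated as a lookup from an ordered (action, counter action) pair to an interaction label. The sketch below transcribes the football rows only; the dictionary, the function name and the choice to return `None` for pairs that are not listed are illustrative assumptions, not part of the dataset.

```python
# Football rows of Table 1, keyed by (action, counter action).
FOOTBALL_INTERACTIONS = {
    ("Kick", "Kick"): "Block",
    ("Kick", "Block"): "Block",
    ("Kick", "Save"): "Block",
    ("Kick", "Other"): "Attack",
}


def football_interaction(action, counter_action):
    """Return the interaction label for an ordered pair, or None if it is not in Table 1."""
    return FOOTBALL_INTERACTIONS.get((action, counter_action))


print(football_interaction("Kick", "Save"))
print(football_interaction("Kick", "Other"))
```

The same pattern extends to the other sports by adding one dictionary per table.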

Acknowledgements

We would like to thank the staff, students and interns of Kingston University for participating in the gamesourcing. We would also like to thank Kevin Bottero and Nicolas Ferrand from École Nationale Supérieure d'Ingénieurs de Caen (ENSICAEN) for their assistance in collecting and annotating the dataset.

For any queries related to the dataset, please email Dr Victoria Bloom.